linkml-datalog

Validation and inference over LinkML instance data using Souffle

Requirements

This project requires Souffle.

After installing Souffle, install the Python package in the normal way.

Until this is released to PyPI, install it with Poetry:

```bash
poetry install
```

Running

Pass in a schema and a data file:

```bash
poetry run python -m linkml_datalog.engines.datalog_engine -d tmp -s personinfo.yaml example_personinfo_data.yaml
```

The output is a ValidationReport object, serialized as YAML, e.g.:

```yaml
- type: sh:MaxValue
  subject: https://example.org/P/003
  instantiates: Person
  predicate: age_in_years
  object_str: '100001'
  info: Maximum is 999
```
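The report fields line up, in order, with the six arguments of the `validation_result` rule in the generated Souffle program shown under "How it works". As a hypothetical sketch of the "results turned into objects" step (the actual conversion code in linkml_datalog may differ), result tuples can be zipped with those field names:

```python
# Hypothetical sketch: the real conversion code in linkml_datalog may differ.
# Field names come from the example report; their order matches the arguments
# of the validation_result rule in the generated Souffle program.
FIELDS = ("type", "subject", "instantiates", "predicate", "object_str", "info")


def to_report(rows):
    """Turn validation_result tuples into report-style dicts, one per violation."""
    report = []
    for row in rows:
        # Refuse rows whose arity does not match the rule's six arguments.
        if len(row) != len(FIELDS):
            raise ValueError(f"expected {len(FIELDS)} columns, got {len(row)}")
        report.append(dict(zip(FIELDS, row)))
    return report
```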

Currently, to look at inferred edges, consult the directory you specified with `-d`.

For example, `tmp/Person_grandfather_of.csv` will contain a (subject, object) tuple linking P:005 to P:001.
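To inspect these files programmatically, here is a minimal sketch. It assumes Souffle's default output format, where each `.output` relation is written to `<relation>.csv` in the output directory as tab-separated columns with no header row. `read_relation` is a hypothetical helper, not part of linkml_datalog.

```python
import csv
from pathlib import Path


def read_relation(output_dir, relation):
    """Load one Souffle output relation from <output_dir>/<relation>.csv.

    Assumes Souffle's default output format: tab-separated columns, no header row.
    QUOTE_NONE keeps any quote characters in values as literal text.
    """
    path = Path(output_dir) / f"{relation}.csv"
    with path.open(newline="") as f:
        reader = csv.reader(f, delimiter="\t", quoting=csv.QUOTE_NONE)
        # Skip blank lines; each remaining row is one inferred tuple.
        return [tuple(row) for row in reader if row]
```

After a run with `-d tmp`, `read_relation("tmp", "Person_grandfather_of")` should return the inferred (subject, object) pairs, with identifiers in whatever form they were written to the facts.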

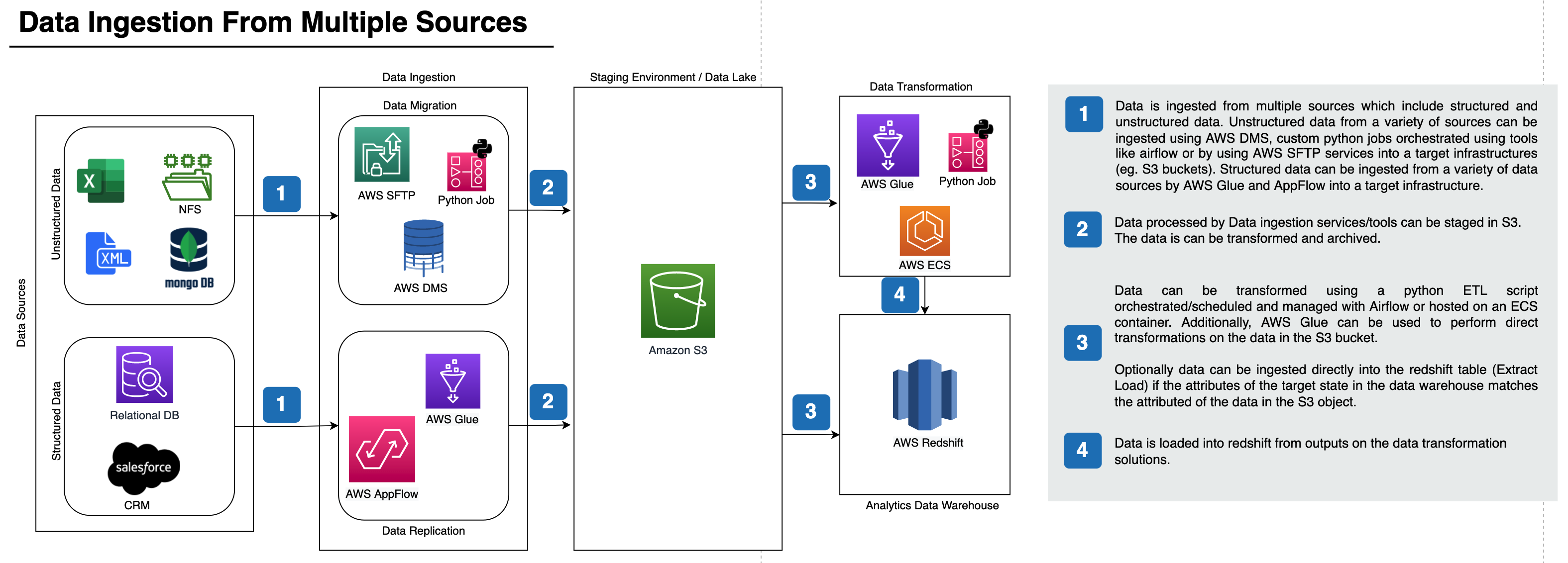

How it works

- Schema is compiled to a Souffle Datalog program (see the generated `schema.dl` file)

- Any logic program embedded in the schema is also added

- Data is converted to generic triple-like tuples (see the `*.facts` files)

- Souffle is executed

- Inferred validation results are turned into objects
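To make the "triple-like tuples" step concrete, the following is a hypothetical sketch, not the project's actual loader. It flattens a nested instance dictionary into (subject, predicate, object) rows and writes them in the tab-separated layout Souffle reads for `.facts` input. The `id` key, the `_:b0`-style names for objects without an id, and the use of bare slot names as predicates are assumptions; the generated program above matches on full predicate URIs instead.

```python
from __future__ import annotations

from itertools import count
from typing import Any, Iterator


def to_triples(
    obj: dict[str, Any], ids: Iterator[int] | None = None
) -> tuple[str, list[tuple[str, str, str]]]:
    """Return (node id, triples) for a nested instance dict.

    Objects without an "id" key get a generated blank-node id ("_:b0", "_:b1", ...).
    List values produce one triple per element; nested dicts are flattened recursively.
    """
    ids = ids if ids is not None else count()
    subject = str(obj["id"]) if "id" in obj else f"_:b{next(ids)}"
    triples: list[tuple[str, str, str]] = []
    for key, value in obj.items():
        if key == "id":
            continue
        values = value if isinstance(value, list) else [value]
        for v in values:
            if isinstance(v, dict):
                # Link to the nested object, then emit the nested object's own triples.
                child, child_triples = to_triples(v, ids)
                triples.append((subject, key, child))
                triples.extend(child_triples)
            else:
                triples.append((subject, key, str(v)))
    return subject, triples


def write_facts(triples: list[tuple[str, str, str]], path: str) -> None:
    """Write triples as tab-separated rows (values must not contain tabs or newlines)."""
    with open(path, "w", encoding="utf-8") as f:
        for row in triples:
            f.write("\t".join(row) + "\n")
```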

Assuming input like this:

```yaml
classes:
  Person:
    attributes:
      age_in_years:
        range: integer
        maximum_value: 999
```

The generated Souffle program will look like this:

```
.decl Person_age_in_years_asserted(i: identifier, v: value)
.decl Person_age_in_years(i: identifier, v: value)
.output Person_age_in_years
.output Person_age_in_years_asserted

Person_age_in_years(i, v) :-
    Person_age_in_years_asserted(i, v).

Person_age_in_years_asserted(i, v) :-
    Person(i),
    triple(i, "https://w3id.org/linkml/examples/personinfo/age_in_years", v).

validation_result(
    "sh:MaxValueTODO",
    i,
    "Person",
    "age_in_years",
    v,
    "Maximum is 999") :-
    Person(i),
    Person_age_in_years(i, v),
    literal_number(v, num),
    num > 999.
```
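For readers less familiar with Datalog, this Python sketch mirrors what the `validation_result` rule above computes: it joins `Person(i)` with `Person_age_in_years(i, v)`, keeps values that parse as numbers, and emits a result when the number exceeds 999. It only illustrates the rule's semantics; Souffle evaluates the rule itself. `as_number` is a hypothetical stand-in for `literal_number`, whose definition is not shown here, and the `"sh:MaxValue"` type string follows the example report rather than the generated rule.

```python
from __future__ import annotations


def as_number(v: str) -> float | None:
    """Hypothetical stand-in for literal_number: numeric value of v, or None if not numeric."""
    try:
        return float(v)
    except ValueError:
        return None


def max_value_violations(
    persons: set[str], ages: set[tuple[str, str]], maximum: int = 999
) -> list[tuple[str, ...]]:
    """Mirror the rule body: Person(i), Person_age_in_years(i, v), numeric v > maximum."""
    results = []
    for i, v in sorted(ages):  # sorted only to make the output order deterministic
        num = as_number(v)
        if i in persons and num is not None and num > maximum:
            results.append(("sh:MaxValue", i, "Person", "age_in_years", v, f"Maximum is {maximum}"))
    return results
```

Note that a value attached to an identifier that is not a `Person` produces no result, just as the `Person(i)` atom filters it out in the Datalog rule.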

Motivation / Future Extensions

The above example shows functionality that could easily be achieved by other means:

- JSON Schema

- shape languages: ShEx/SHACL

In fact, the core LinkML library already has wrappers for these; see the "Working with data" guide in the LinkML documentation.

However, JSON Schema in particular offers very limited expressivity, whereas LinkML leaves room for much richer constraints.

In particular, LinkML 1.2 introduces autoclassification rules, conditional logic, and complex expressions. These are NOT translated yet, but will be in future.

For now, you can also include your own rules as an annotation in the header of your schema. For example, the following rules translate a 'reified' association model of relationships into direct slot assignments, and add transitive inferences:

```
has_familial_relationship_to(i, p, j) :-
    Person_has_familial_relationships(i, r),
    FamilialRelationship_related_to(r, j),
    FamilialRelationship_type(r, p).

Person_parent_of(i, j) :-
    has_familial_relationship_to(i, "https://example.org/FamilialRelations#02", j).

Person_ancestor_of(i, j) :-
    Person_parent_of(i, z),
    Person_ancestor_of(z, j).

Person_ancestor_of(i, j) :-
    Person_parent_of(i, j).
```
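The two `Person_ancestor_of` rules compute a transitive closure. To show what fixpoint Souffle reaches, here is a hypothetical Python sketch using naive bottom-up evaluation: it joins the three reified-association relations on the relationship node `r`, keeps the pairs whose type is `https://example.org/FamilialRelations#02`, then applies the ancestor rules until no new pairs appear. Souffle itself uses a more efficient semi-naive strategy; the function names here are illustrative only.

```python
from __future__ import annotations

PARENT_TYPE = "https://example.org/FamilialRelations#02"


def parent_of(
    has_rel: set[tuple[str, str]],
    related_to: set[tuple[str, str]],
    rel_type: set[tuple[str, str]],
) -> set[tuple[str, str]]:
    """Person_parent_of, via has_familial_relationship_to(i, p, j) joined on r."""
    familial = {
        (i, p, j)
        for (i, r) in has_rel
        for (r2, j) in related_to if r2 == r
        for (r3, p) in rel_type if r3 == r
    }
    return {(i, j) for (i, p, j) in familial if p == PARENT_TYPE}


def ancestor_of(parent: set[tuple[str, str]]) -> set[tuple[str, str]]:
    """Person_ancestor_of as the least fixpoint of the two rules."""
    ancestor = set(parent)  # base rule: ancestor_of(i, j) :- parent_of(i, j)
    while True:
        # recursive rule: ancestor_of(i, j) :- parent_of(i, z), ancestor_of(z, j)
        new = {(i, j) for (i, z) in parent for (z2, j) in ancestor if z2 == z}
        if new <= ancestor:
            return ancestor  # nothing new derived: fixpoint reached
        ancestor |= new
```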

See the tests for more details.

In future, these will be compilable from higher-level predicates.

Background

See #196